September 2021 Issue

Research Highlights

Recognition technology

Radar-based human recognition for self-driving cars

Self-driving cars need detectors that can sense the vehicle’s environment even when visibility is limited, for example in bad weather. Radar-based sensors have emerged as an essential component of driver assistance systems and self-driving vehicles, as they can robustly distinguish nearby pedestrians and other traffic-relevant objects. Besides working in bad weather, artificial recognition systems must also cope with so-called non-line-of-sight (NLOS) situations, in which the line of sight between detector and object is obstructed. In traffic, NLOS situations occur when pedestrians are hidden from view; for example, a child behind a parked car who is about to run into the street. Now, Shouhei Kidera from the University of Electro-Communications and colleagues have developed a radar-based detection method for recognizing humans in NLOS situations. The scheme is based on reflection and diffraction signal analysis and machine-learning techniques.

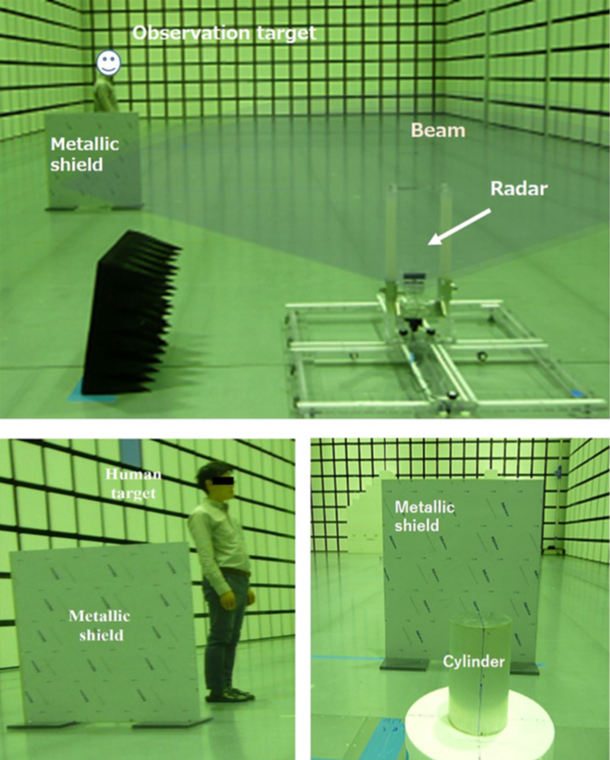

The researchers performed radar experiments in an anechoic chamber (a room designed to absorb reflections of the radio waves). A radar works by sending radio waves towards a target object and then analysing the reflected waves (e.g. changes in frequency), which provides information about the object, such as its distance from the source.
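As a rough illustration of this principle, the following Python sketch converts an echo's round-trip delay into a distance and a Doppler frequency shift into a radial velocity. These are standard textbook relations for a radar whose transmitter and receiver sit at the same place, not the signal processing used by the researchers. The function names and the example delay and Doppler values are assumptions chosen for illustration; only the 24 GHz carrier matches the frequency reported for the experiments.

```python
C = 299_792_458.0  # speed of light in m/s


def range_from_delay(round_trip_delay_s: float) -> float:
    """Distance to the target in metres; the wave travels out and back, hence the factor 1/2."""
    return C * round_trip_delay_s / 2.0


def radial_velocity_from_doppler(doppler_shift_hz: float, carrier_hz: float) -> float:
    """Radial speed in m/s from the two-way Doppler relation f_d = 2 * v * f_c / c."""
    return doppler_shift_hz * C / (2.0 * carrier_hz)


if __name__ == "__main__":
    # Hypothetical example values, not measurements from the study.
    print(f"range: {range_from_delay(20e-9):.2f} m")
    print(f"speed: {radial_velocity_from_doppler(160.0, 24e9):.2f} m/s")
```

With these assumed inputs, a 20 ns round trip corresponds to a target about 3 m away, and a 160 Hz shift at 24 GHz to a radial speed of about 1 m/s, roughly a slow walking pace.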

Kidera and colleagues placed a metallic plate in the chamber so that an NLOS situation arises when the target object is behind the plate from the radar’s point of view. The radar’s frequency was 24 GHz, and two target objects were used in the experiments: a 30-cm-long metallic cylinder, and a human wearing light clothes. Three regimes were investigated: complete NLOS, partial NLOS (target object positioned at the border between the NLOS and the LOS zone) and complete LOS. The signals received by the detector were intrinsically different for the metallic cylinder and the human. Even if a human stands still, breathing and small movements related to posture control cause changes in the reflected wave signals. The scientists found that the differences are enhanced by diffraction effects: the ‘bending’ of waves around the edges of the metallic plate.
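Why such small body movements are visible to a 24 GHz radar can be made plausible with a short calculation. The sketch below computes the wavelength at that frequency and the phase change of the echo when a reflecting surface moves slightly towards or away from the radar, using the standard two-way path relation. The 1 mm displacement is a hypothetical value picked for illustration, not a figure from the study, and the function names are likewise assumptions.

```python
import math

C = 299_792_458.0  # speed of light in m/s


def wavelength_m(carrier_hz: float) -> float:
    """Free-space wavelength in metres."""
    return C / carrier_hz


def echo_phase_shift_rad(displacement_m: float, carrier_hz: float) -> float:
    """Phase change of the echo for a radial displacement d: 4 * pi * d / wavelength.

    The factor 4 (rather than 2) reflects the doubled path length, out and back.
    """
    return 4.0 * math.pi * displacement_m / wavelength_m(carrier_hz)


if __name__ == "__main__":
    f_c = 24e9          # carrier frequency reported for the experiments
    d = 1e-3            # ASSUMED 1 mm displacement, for illustration only
    print(f"wavelength: {wavelength_m(f_c) * 1e3:.2f} mm")
    print(f"phase shift: {echo_phase_shift_rad(d, f_c):.2f} rad")
```

At 24 GHz the wavelength is about 12.5 mm, so a displacement of only 1 mm already shifts the echo phase by about 1 rad, whereas a rigid, motionless cylinder produces no such modulation.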

The researchers applied a machine-learning algorithm to the reflection and diffraction signals so that their sensing device could learn the difference between a human and a non-human object. A recognition rate of up to 80% was achieved. They also performed experiments with an actual car as the shielding object, which led to similar results and a better understanding of how the recognition success rate depends on the radar’s position relative to the target. In addition, by carrying out further experiments with a human performing a stepping motion, the scientists were able to recognize whether a human is standing still or walking, even in complete NLOS situations.
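The general idea of learning to separate a fluctuating echo from a steady one can be sketched with a deliberately simple toy model. The highlight does not say which classifier or features the researchers used, so everything below is an assumption made for illustration: the synthetic signals, the 20 Hz sampling rate, the 0.3 Hz ripple, the noise level, the single "fluctuation" feature and the threshold rule are not the authors' method and do not use their data.

```python
import math
import random
import statistics
from typing import List, Tuple


def synthetic_echo(human: bool, rng: random.Random, n: int = 200) -> List[float]:
    """Toy echo amplitude over 10 s at an assumed 20 Hz sampling rate (NOT measured data)."""
    phase = rng.uniform(0.0, 2.0 * math.pi)
    ripple = 0.5 if human else 0.0  # assumed breathing-like modulation depth
    return [
        1.0 + ripple * math.sin(2.0 * math.pi * 0.3 * k / 20.0 + phase) + rng.gauss(0.0, 0.05)
        for k in range(n)
    ]


def fluctuation(echo: List[float]) -> float:
    """Single hand-picked feature: standard deviation of the echo over time."""
    return statistics.pstdev(echo)


def train_threshold(samples: List[Tuple[List[float], bool]]) -> float:
    """'Learn' a decision threshold halfway between the two class means of the feature."""
    human = [fluctuation(e) for e, is_h in samples if is_h]
    other = [fluctuation(e) for e, is_h in samples if not is_h]
    return (statistics.mean(human) + statistics.mean(other)) / 2.0


def classify_human(echo: List[float], threshold: float) -> bool:
    """Label an echo as human if it fluctuates more than the learned threshold."""
    return fluctuation(echo) > threshold


if __name__ == "__main__":
    rng = random.Random(0)
    train = [(synthetic_echo(label, rng), label) for label in [True, False] * 10]
    threshold = train_threshold(train)
    test = [(synthetic_echo(label, rng), label) for label in [True, False] * 10]
    correct = sum(classify_human(e, threshold) == label for e, label in test)
    print(f"{correct}/{len(test)} toy echoes classified correctly")
```

Because the two synthetic classes are cleanly separated by construction, this toy problem is far easier than real NLOS measurements and is not meant to reproduce the reported recognition rate.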

The results of Kidera and colleagues mark an important step towards feasible self-driving car technology. Of course, more research is needed before realistic traffic situations can be handled reliably. Quoting the researchers: “… there should be further investigation using other classifiers or features, which is our important future work”.

Experimental setup used by Shouhei Kidera and colleagues.